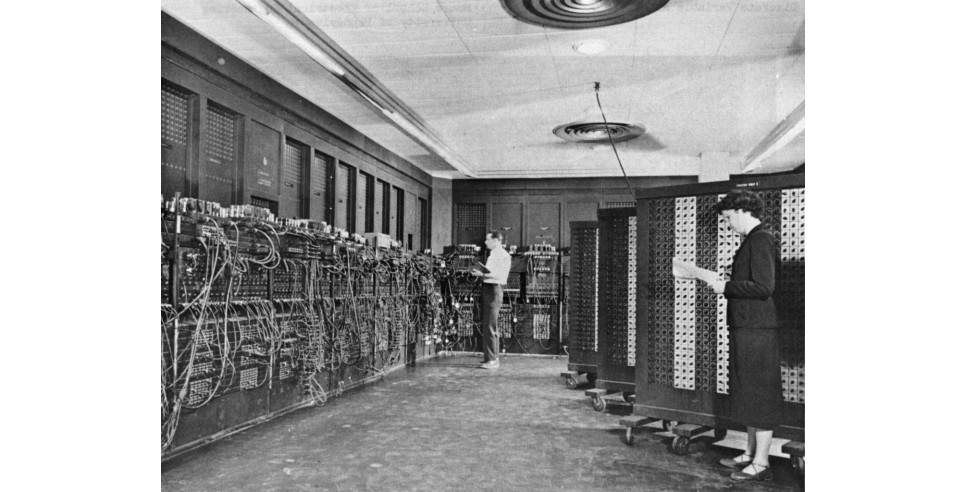

The earliest computers were government projects, designed to perform arithmetic faster than humans could on paper or in their heads, save for a few savants with amazing math skills. Computations at a rapid pace: thus the name computers. The first US Government computer went by an acronym, ENIAC: Electronic Numerical Integrator and Computer.

ENIAC was enormous. Too big for an ordinary room, it was housed in a 50-by-30-foot basement. The massive structure contained 40 panels arranged in a U shape along three walls. Each unit was roughly 2 feet wide by 2 feet deep by 8 feet high. And it required a lot of power! With roughly 18,000 vacuum tubes, 70,000 resistors, 10,000 capacitors, 6,000 switches, and 1,500 relays, ENIAC was the most complex electronic system built up to that time. ENIAC ran continuously, in part to extend tube life, generated 150 kilowatts of heat, and could execute up to 5,000 additions per second, several orders of magnitude faster than its electromechanical predecessors. Begun as a WWII project, it was completed by February 1946, at a cost to the government of $400,000. By then the war was over, but the US led the way into computing and computers. ENIAC was used in the development of the hydrogen bomb.

The humongous computers were followed by room-size mainframes. Univac, IBM, HP, Honeywell, Control Data, and others served the needs of major enterprises with large mainframes. Along with those mainframes came software jobs and engineering and technical staffing needs. And an immediate and permanent need to air condition (cool) the computer rooms. And, oh yes, huge power bills.

In August 2007, ten years ago, the EPA published a report to Congress on Data Center and Server Energy Efficiency. The report found that data centers in the United States used about 1.5% of all electricity consumed in the country. That electricity usage had doubled in the prior five years. The EPA warned that it would double again should the then-current data center power consumption trends continue. The EPA encouraged server vendors (IBM, HP, others) to publish typical energy usage numbers to assist their customers in making informed decisions based on energy efficiency. IBM said its mainframe gas gauge, part of its Project Big Green, was part of a $1 billion investment announced in May 2007 to increase the efficiency of IBM products. This story received incisive coverage in Network World, a key trade journal read by data center and mainframe professionals.

Today the US Government weighs in even further on this topic. The EPA’s website lists Energy Star guidelines for saving energy, using less power, and being more efficient with your home computer equipment. There’s also an Energy Star buying guide page.

Desktops are slowly being replaced by laptops and tablets. Some users even abandon all of these for smartphones. Odd though this may sound, a colleague of mine wrote two books on his BlackBerry, the first on an old BlackBerry, and the second on a BlackBerry smartphone.

Laptops and tablets eat up fewer watts on your electric bill. You can charge the batteries and keep working without plugging into an outlet. Portability also frees up usage and makes computing ubiquitous.

A white paper published in 2006 found significant energy savings for laptops compared with desktops. It reviewed studies conducted over the previous five-year period. Looking just at the desktop versus laptop numbers, we learn that sixteen years ago laptops required between 12W and 22W when active, between 1.5W and 6W in low power mode, and between 1.5W and 2W when turned off with the battery fully charged. That’s fewer watts to pay for on your monthly electric bill.

This study calculated that, on average, laptops require 15W when active, compared to 55W for active desktops; 3W in low power mode, compared to 25W for desktops in low power mode; and 2W when turned off, compared to 1.5W for desktops that are off.
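To put those wattages in terms of an electric bill, here is a rough sketch in Python. The power figures are the study averages quoted above; the daily usage pattern (`HOURS_PER_DAY`) and the electricity price (`PRICE_PER_KWH`) are illustrative assumptions, not numbers from the study, so treat the result as a ballpark only.

```python
# Average power draw in watts, from the study figures quoted above.
POWER_W = {
    "laptop": {"active": 15.0, "low_power": 3.0, "off": 2.0},
    "desktop": {"active": 55.0, "low_power": 25.0, "off": 1.5},
}

# Assumed usage pattern: hours per day spent in each mode (hypothetical).
HOURS_PER_DAY = {"active": 4.0, "low_power": 4.0, "off": 16.0}

# Assumed electricity price in dollars per kilowatt-hour (hypothetical).
PRICE_PER_KWH = 0.13


def annual_kwh(device, hours=HOURS_PER_DAY, days=365):
    """Estimate yearly energy use in kWh for 'laptop' or 'desktop'."""
    watt_hours_per_day = sum(POWER_W[device][mode] * h for mode, h in hours.items())
    return watt_hours_per_day * days / 1000.0


def annual_cost(device, price=PRICE_PER_KWH):
    """Estimate the yearly electricity cost in dollars."""
    return annual_kwh(device) * price


if __name__ == "__main__":
    for device in ("laptop", "desktop"):
        print(f"{device}: {annual_kwh(device):.2f} kWh/year, "
              f"${annual_cost(device):.2f}/year")
```

Under these assumptions the laptop comes to about 38 kWh a year and the desktop to about 126 kWh. Change the hours or the price to match your own habits and utility rate.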

Another study from fifteen years ago found similar results for laptops: 19W when active and 3W in low power mode. That study, however, concluded that desktop computers used 70W when active and 9W in low power mode.

These days a gaming laptop draws more power than a standard laptop, due to speedier and more demanding processing and graphics. Either way, for the most part, laptops are more efficient than desktops. Tablets are more efficient than laptops. Phones are more efficient than tablets.

Many Internet of Things devices enable users to turn connected devices on or off remotely. Heat and air conditioning are the obvious first ideas. Smart sensors can be used to react automatically, or to alert a user or machine to respond. A sensor could detect the movement or gestures of a person who has fallen asleep, and would then automatically turn off the TV after a period of time. Heart and glucose monitors could send updates to health care monitoring services, which would be programmed to transmit alerts when the numbers or events are cause for concern. Any variety of health monitor fits this example.

Imagine, in the not-too-distant future, your home (or maybe your second or vacation home) being in touch with the smart energy (electrical) grid via IoT technology, software, and the cloud. Your home system software, or simply the generated data, could inform the power utility company that there has been no activity in the home for a while. The water heater there can be turned off remotely by the power company, via the smart grid and your interactive IoT software, and you wouldn’t know it. The energy savings would occur on your behalf.
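The decision at the heart of that scenario is simple enough to sketch in a few lines of Python. Everything here is hypothetical: the function name, the two-day idle threshold, and the idea that the home reports a single "last activity" timestamp are assumptions made for illustration, not a description of any real utility or smart grid system.

```python
from datetime import datetime, timedelta

# Assumed idle period after which the water heater may be switched off (hypothetical).
IDLE_THRESHOLD = timedelta(days=2)


def should_switch_off(last_activity, now, threshold=IDLE_THRESHOLD):
    """Return True when the home has been idle for at least `threshold`."""
    return now - last_activity >= threshold


if __name__ == "__main__":
    last_seen = datetime(2024, 1, 1, 8, 0)   # last motion or appliance use reported
    checked_at = datetime(2024, 1, 4, 8, 0)  # when the utility evaluates the home
    if should_switch_off(last_seen, checked_at):
        print("No recent activity: water heater can be turned off.")
    else:
        print("Home is in use: leave the water heater on.")
```

A real system would also need a way to switch the heater back on before anyone returns, which this sketch leaves out.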

These activities save energy, and they are economically beneficial as well.

This just scratches the surface. Computers have gone from massive, gigantic machines to handheld devices with more processing power than those behemoths of the 1940s and 50s. Power consumption gets more efficient as the machinery slims down. The digital future will be even more adroit, more rewarding.

Dean Landsman is a NYC-based Digital Strategist who writes a monthly column, “The Connector,” for PR for People.